VR is everywhere, with a broad variety of industrial applications and the potential to revolutionize many other industries. The potential of virtual reality technology is immense, and it is a driver of digital transformation.

VR is an innovation that has been around since the 1990s, and even though companies are increasingly concerned about digital trust, VR is now widely used in professional settings. It is transforming today's industries and gives everyone with an engineering background the opportunity to shape tomorrow. Thanks to virtual reality, engineers and developers can anticipate and solve issues before they happen.

Now, let’s dive into what VR in engineering really entails:

- What is virtual reality?

- Important concepts of Virtual Reality

- What hardware does virtual reality use?

- How VR impacts today’s world

- Applications of Virtual Reality in engineering

- Advantages and disadvantages of VR

- VR features that can boost engineering

- ROI of VR

- How to become a Virtual Reality engineer

What is virtual reality?

Definition of virtual reality

Virtual reality (VR) is a computer-generated simulation in which the user, in full immersion, can interact with the virtual environment. The viewer sees the virtual world through a virtual reality headset or a projection-based device (powerwall or immersive room) and interacts with 3D objects using controllers.

In the real world, we use our different senses to perceive our environment. Virtual reality mostly relies on visual and auditory inputs (and sometimes touch, through haptics) to emulate the sensation of presence and make you believe you really are in the virtual environment.

What is the difference between virtual reality and augmented reality?

Virtual and augmented reality are different, even though they are two sides of the same coin:

- Augmented reality works by overlaying virtual objects on the real world. You see virtual objects through the lenses of AR glasses or on your smartphone's screen.

- Virtual reality works by immersing you in a virtual environment. This environment exists apart from your current physical surroundings.

AR and VR can both rely on the same sensors and headsets, but they don’t serve the same purpose.

History of VR

The history of virtual reality is not exactly a recent one. The technology is built upon ideas that date back to the 1800s. Many inventors wanted people to get “inside” stories, or to experience simulated environments through sensory stimulation. However, many of these inventions failed because the technologies virtual reality relies upon (like computer graphics, processors, internet bandwidth, 3D rendering…) had not been invented yet, or were not powerful enough.

The idea of being immersed in a created universe isn't new. The term “virtual reality” was coined in 1987 by Jaron Lanier, founder of VPL Research, while he was developing some of the first VR gear (including semi-immersive virtual reality glasses and tracking gloves). In the 1990s, virtual reality first became popular, as well as viable for industrial use and research purposes. But it was still too expensive and lacked the technology needed to truly trick the brain into believing the virtual simulation was actually “real”.

Today's virtual reality owes a great deal to the inventors who paved the way for high-end VR equipment that is nevertheless accessible for many use cases. Of course, the technology is still evolving, and specific applications can still be very costly.

17 important virtual reality concepts

We have long wanted to escape the limitations of the computer screen, keyboard and mouse. With virtual technologies, we are learning more and more about how to create intuitive human-machine interactions (HMI), a field closely tied to human factors integration. VR is a new way of communicating with machines.

Before you can delve further into VR in engineering, it is important to grasp some core concepts about virtual reality. Here’s a little glossary of VR concepts you should know.

1. Computer-Aided Design (CAD) and Computer-Aided Engineering (CAE)

Computer-Aided Design (CAD) and Computer-Aided Engineering (CAE) software are programs used in engineering and design to create parts or complete virtual prototypes. CAD and CAE software now benefit from the visual collaboration capabilities of virtual reality systems, which help reduce the time and resources spent on product development.

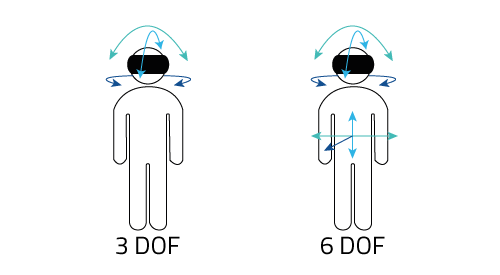

2. Degree of Freedom (DoF)

In mechanics, the number of degrees of freedom is the minimum number of independent variables required to define the position and orientation of an object in space. In other words, the DoF determines the number of independent ways in which an object can move. In 3D, these variables are:

- the rotation angles about the x, y and z axes (also known as pitch, yaw and roll)

- the translation of the object from the origin along the x, y and z axes

When you are immersed in a virtual environment, you can either stay in a fixed position (sitting, for example) or move around. 3-DoF headsets track only rotational movement: they register whether you look left or right, up or down, or tilt your head at different angles. 6-DoF headsets also track translational motion, which lets you physically move through the virtual space: forward, backward, sideways and even up and down.

In short, 3-DoF headsets like Google Cardboard, Oculus Go or Samsung Gear VR are best suited to consuming 360-degree content. If you are working with 3D models and need to move around, 6-DoF headsets such as the Oculus Rift or HTC Vive Pro are better for your use case. And if you need even more powerful head-mounted displays, check out the Pimax 8K or Varjo VR-3.
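To make the 3-DoF/6-DoF distinction concrete, here is a minimal Python sketch of a head pose. The `Pose` class and the two tracker functions are hypothetical illustrations, not any headset SDK's API, and the axis convention (y up, -z forward, angles in degrees) is an assumption.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Pose:
    """Hypothetical 6-DoF head pose (assumed convention: y up, -z forward)."""
    x: float = 0.0      # translation along x, metres
    y: float = 0.0      # translation along y, metres
    z: float = 0.0      # translation along z, metres
    pitch: float = 0.0  # rotation about x, degrees (looking up/down)
    yaw: float = 0.0    # rotation about y, degrees (looking left/right)
    roll: float = 0.0   # rotation about z, degrees (tilting the head)


def seen_by_3dof(real: Pose) -> Pose:
    """A 3-DoF tracker reports orientation only: translation is lost."""
    return replace(real, x=0.0, y=0.0, z=0.0)


def seen_by_6dof(real: Pose) -> Pose:
    """A 6-DoF tracker reports both orientation and translation."""
    return real


# The user steps 0.5 m forward and turns their head 30 degrees.
movement = Pose(z=-0.5, yaw=30.0)
print(seen_by_3dof(movement))  # the step forward is not registered
print(seen_by_6dof(movement))  # the step forward is registered
```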

3. Dollhouse view

The dollhouse view is when you look at a 3D model fully zoomed out, usually from a top-down perspective. It enables VR engineers and designers to look at a (large-scale) CAD model and see what it should look like once it is entirely built.

4. Eye-tracking

Eye tracking is a process used by some headsets to follow the user's gaze. It gives accurate information on what the viewer is looking at and can be used, for example, to bring one virtual object or another into focus, furthering the immersion.
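One simple way gaze data could be used to pick the object the user is looking at is to compare the gaze direction with the direction to each object and keep the closest one within a small angular tolerance. This is a hedged sketch: the function name, the 5° tolerance and the object positions are hypothetical, and real eye-tracking SDKs expose their own APIs.

```python
import math
from typing import Dict, Optional, Tuple

Vec3 = Tuple[float, float, float]


def _normalize(v: Vec3) -> Vec3:
    n = math.sqrt(v[0] ** 2 + v[1] ** 2 + v[2] ** 2)
    return (v[0] / n, v[1] / n, v[2] / n)


def gazed_object(eye: Vec3, gaze_dir: Vec3, objects: Dict[str, Vec3],
                 max_angle_deg: float = 5.0) -> Optional[str]:
    """Return the object whose centre is closest to the gaze ray, if one
    lies within max_angle_deg of it (hypothetical selection rule)."""
    g = _normalize(gaze_dir)
    best_name, best_angle = None, max_angle_deg
    for name, centre in objects.items():
        offset = (centre[0] - eye[0], centre[1] - eye[1], centre[2] - eye[2])
        if offset == (0.0, 0.0, 0.0):
            continue  # object at the eye position: direction undefined
        d = _normalize(offset)
        # Clamp to avoid math domain errors from rounding just above 1.0.
        cos_a = max(-1.0, min(1.0, g[0] * d[0] + g[1] * d[1] + g[2] * d[2]))
        angle = math.degrees(math.acos(cos_a))
        if angle <= best_angle:
            best_name, best_angle = name, angle
    return best_name


scene = {"valve": (0.0, 0.0, -5.0), "pump": (3.0, 0.0, -5.0)}
print(gazed_object((0.0, 0.0, 0.0), (0.0, 0.0, -1.0), scene))
```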

5. Field of View (FoV)

The field of view is the angular extent, in degrees, of what is visible from a given point of view. The human FoV is generally about 200° horizontally (binocular vision only covers about 120°). In VR, the FoV is limited by the lenses: to get a broader field of view, you need either bigger lenses (and therefore a bigger headset) or lenses closer to the eyes (which might cause headaches). Most headsets on the market have a FoV of 100-120°, but most vendors are working to expand the FoV of their head-mounted displays (HMDs).
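The trade-off between lens size and eye distance can be approximated by treating the lens as a window in front of the eye: a window of width w at distance d subtends an angle of 2·atan(w / 2d). This Python sketch uses that simplified pinhole model, which ignores the magnification that real VR optics add, and the lens widths and distances in it are hypothetical.

```python
import math


def window_fov_deg(lens_width_m: float, eye_to_lens_m: float) -> float:
    """Angle subtended by a lens of the given width at the given distance
    from the eye (simplified pinhole model, no lens magnification)."""
    return math.degrees(2.0 * math.atan(lens_width_m / (2.0 * eye_to_lens_m)))


# Hypothetical values: a bigger lens or a shorter eye distance widens the FoV.
for width, distance in [(0.04, 0.02), (0.05, 0.02), (0.04, 0.015)]:
    fov = window_fov_deg(width, distance)
    print(f"lens {width * 100:.0f} cm at {distance * 100:.1f} cm -> {fov:.0f} deg")
```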

6. Frame rate (fps)

The frame rate is the frequency at which an image (or frame) is replaced by another on the screen. This is what creates the illusion of movement. A low frame rate gives the impression of choppy movement, while a high frame rate gives smooth movement. 60 fps means 60 images are displayed every second. Frame rates depend on your CPU (Central Processing Unit) and GPU (Graphics Processing Unit).

To prevent users from getting motion sickness and headaches, VR needs to maintain a high frame rate (at least 90 fps).
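The frame rate translates directly into a time budget per frame: at 90 fps, the application has 1000 / 90 ≈ 11.1 ms to simulate and render each frame, compared with about 16.7 ms at 60 fps. A minimal Python sketch of that check, with hypothetical frame-time measurements:

```python
def frame_budget_ms(target_fps: float) -> float:
    """Time available to produce one frame at the target frame rate."""
    return 1000.0 / target_fps


def meets_target(frame_times_ms: list, target_fps: float = 90.0) -> bool:
    """True if every measured frame fits within the frame budget."""
    budget = frame_budget_ms(target_fps)
    return all(t <= budget for t in frame_times_ms)


measured = [9.8, 10.4, 12.3, 10.1]  # hypothetical frame times in ms
print(f"budget at 90 fps: {frame_budget_ms(90):.1f} ms")
print("holds 90 fps:", meets_target(measured))  # 12.3 ms is over budget
```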


7. Haptics

Haptics provide feedback to users by physically simulating their interactions with the virtual environment. For example, this can be a vibration effect on the controllers. Other wearable gear, such as gloves, can enhance the experience further. With haptics, VR narrows the gap between the real world and the virtual world even more, by adding the sense of touch to a completely immersive experience.

8. Head tracking

Head tracking consists of tracking the position and orientation of the user's head. This information allows the point of view to follow the user's movements in the virtual world. In full immersion, head tracking is essential to give users complete freedom of movement and a realistic, interactive virtual reality experience.
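Each frame, the renderer reads the latest tracked head orientation and points the virtual camera accordingly. The sketch below converts a yaw/pitch reading into the camera's forward vector; the axis convention (y up, -z forward, positive yaw turning left) is an assumption, and a real engine would typically use quaternions and full view matrices instead.

```python
import math
from typing import Tuple


def view_direction(yaw_deg: float, pitch_deg: float) -> Tuple[float, float, float]:
    """Forward vector of the virtual camera for a tracked head orientation.

    Assumed convention: y is up, the camera looks along -z at yaw = pitch = 0,
    positive yaw turns left and positive pitch looks up.
    """
    yaw, pitch = math.radians(yaw_deg), math.radians(pitch_deg)
    return (
        -math.sin(yaw) * math.cos(pitch),
        math.sin(pitch),
        -math.cos(yaw) * math.cos(pitch),
    )


# Simulated head-tracking samples (yaw, pitch) in degrees.
for yaw, pitch in [(0, 0), (90, 0), (0, 45)]:
    x, y, z = view_direction(yaw, pitch)
    print(f"yaw={yaw:>3} pitch={pitch:>2} -> ({x:+.2f}, {y:+.2f}, {z:+.2f})")
```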

9. Immersion vs presence

Two concepts often appear in VR articles and papers: immersion and presence. Immersion in a VR system depends on sensory immersion, which is “the degree which the range of sensory channel is engaged by the virtual simulation” (Kim and Biocca 2018). Presence is the user's perceptual illusion of being in the virtual environment: users react to changes in the virtual world, even though they cognitively know it is not reality.

These two terms are very close and are often used interchangeably. Most of the time, papers use immersion for the objective level of sensory fidelity and presence for the subjective…

Note that a high level of immersion is not necessarily good for collaborating in VR. Being able to see your real body while experiencing VR (for instance with a Powerwall or in a CAVE), or a tracked representation of it using body-tracking software, is less disorienting and reduces the risk of bumping into another VR user.

10. Inside-out tracking vs outside-in tracking

Inside-out and outside-in tracking are two different approaches to tracking motion in the real world so it can be replicated in virtual reality:

- Inside-out tracking uses sensors placed on the VR headset itself. The cameras on the headset record fixed points in your real surroundings and use them as reference points for your movements. Markerless inside-out tracking is also possible and relies on positional tracking of objects, but it is less accurate, although much cheaper.

- Outside-in tracking uses an external tracking system (cameras or lighthouses) to define the “virtual box” in which the user moves. It is very accurate but cannot track anything outside the sensors' line of sight.

11. Judder

Judder (or smearing) is the manifestation of motion blur and the perception of more than one image simultaneously. It is caused by a low refresh rate or dropped frames. Judder may cause simulator sickness.

12. Latency

Latency is the delay between a user's input (such as a head movement or a controller action) and the corresponding output (through the screen, earphones or haptics). It is mostly a technical problem that should diminish as technology improves. High latency can cause VR sickness, because your brain notices a mismatch between your movements and the feedback it receives from the virtual environment.
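Latency is often reasoned about as a motion-to-photon budget: the sum of the delays of every stage between a head movement and the updated image. The stage names and numbers below are hypothetical, not measurements of any real headset, and the 20 ms comfort threshold is an assumption based on a commonly cited target rather than a formal standard.

```python
# Hypothetical per-stage delays (ms) for one motion-to-photon cycle.
PIPELINE_MS = {
    "sensor sampling": 2.0,
    "pose estimation": 1.0,
    "simulation and rendering": 11.0,
    "compositing": 2.0,
    "display scan-out": 4.0,
}

COMFORT_THRESHOLD_MS = 20.0  # assumed target, not a formal standard


def motion_to_photon_ms(stages: dict) -> float:
    """Total delay between a head movement and the matching image."""
    return sum(stages.values())


total = motion_to_photon_ms(PIPELINE_MS)
status = "within" if total <= COMFORT_THRESHOLD_MS else "over"
print(f"motion-to-photon: {total:.1f} ms ({status} budget)")
```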

13. Metaverse

These days, many people use the terms “virtual reality” and “metaverse” as if they meant the same thing, but they do not. The metaverse is a concept that has yet to be precisely defined, while virtual reality is a technology that has existed since the 1990s.

Theoretically, the metaverse is a network of interconnected virtual worlds in which you can explore, interact and create content. Each world may have its own rules, but a single user can move freely from one world to another. The metaverse used to be just science fiction, but we might be close to having one in the coming years.


14. Monoscopic vs stereoscopic videos

Monoscopic videos (180° and 360°) are the easiest to produce for consumer VR. Basically, the same image, stitched together from several camera views, is sent to both eyes to give the illusion of being inside a cylinder or a sphere. As a result, the image looks completely flat, but it can also be watched on other devices such as a computer screen (dragging to navigate) or a smartphone (using its motion sensors).

On the other hand, stereoscopic 3D content displays two sets of images (one for each eye). This type of content is much closer to the way we see the real world, as each eye gets the same information from a slightly different angle (just like our own eyes do). This gives the brain a sense of depth in 360° content. Stereoscopic 360° videos require a stereoscopic display such as an HMD.
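Rendering stereoscopic content essentially means drawing the scene twice, from two virtual cameras separated horizontally by the interpupillary distance (IPD). This minimal sketch computes the two eye positions from a tracked head position; the function is a hypothetical illustration, and the 63 mm default is an assumed typical adult IPD (real headsets let users adjust it).

```python
from typing import Tuple

Vec3 = Tuple[float, float, float]


def eye_positions(head: Vec3, right: Vec3, ipd_m: float = 0.063) -> Tuple[Vec3, Vec3]:
    """Left and right virtual camera positions, offset by half the IPD
    along the head's (unit) right vector."""
    half = ipd_m / 2.0
    left_eye = tuple(h - half * r for h, r in zip(head, right))
    right_eye = tuple(h + half * r for h, r in zip(head, right))
    return left_eye, right_eye


left, right = eye_positions((0.0, 1.7, 0.0), (1.0, 0.0, 0.0))
print(left, right)  # each eye gets a slightly different viewpoint
```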

15. Resolution

Resolution (usually of a screen) is the level of detail an image can display, determined by its number of pixels. A higher resolution means more pixels to render more detail. Of course, it also depends on the size of the screen: a low-resolution image may look detailed enough on a small screen (a smartphone, for instance) but will immediately look pixelated or blurry on a larger one.

On a VR device, a given resolution often results in a lower perceived level of detail, because the content appears on a much larger surface than a flat rectangular screen: the image is stretched over a wider area. Also note that on a headset with a single shared panel, each eye only sees half of the panel's resolution in stereoscopic view.
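A common way to compare how sharp headsets look is angular resolution, in pixels per degree (PPD): the horizontal pixels each eye receives divided by the horizontal field of view. This sketch computes a simple average (real lenses distribute pixels unevenly, so the centre is usually sharper than the edges); the panel widths and FoVs are hypothetical.

```python
def pixels_per_degree(panel_width_px: int, fov_deg: float,
                      shared_panel: bool = False) -> float:
    """Average horizontal pixels per degree seen by one eye.

    If a single panel is shared by both eyes, each eye only gets half of
    its horizontal pixels.
    """
    per_eye_px = panel_width_px / 2 if shared_panel else panel_width_px
    return per_eye_px / fov_deg


# Same per-eye pixel count spread over a wider FoV -> less detail per degree.
print(pixels_per_degree(1920, 100))                     # one panel per eye
print(pixels_per_degree(1920, 120))
print(pixels_per_degree(3840, 100, shared_panel=True))  # shared panel
```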

16. Refresh rate

Refresh rate is the number of times per second your display redraws the image on the screen. It is measured in Hertz (Hz). Like the frame rate, the refresh rate affects latency, and it is very important for a realistic virtual environment. Your refresh rate should match your frame rate; otherwise, the images displayed by your screen will not match the images generated by your computer.
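When the renderer cannot keep up with the display, the screen has to show the previous frame again on some refreshes, which is one way judder appears. A small sketch of that arithmetic, assuming a steady render rate and no frame-interpolation techniques:

```python
def repeated_refresh_fraction(render_fps: float, refresh_hz: float) -> float:
    """Share of display refreshes that re-show an old frame because no new
    frame was ready, assuming a steady render rate."""
    if render_fps >= refresh_hz:
        return 0.0
    return 1.0 - render_fps / refresh_hz


print(f"{repeated_refresh_fraction(60, 90):.0%} of refreshes repeat a frame")
print(f"{repeated_refresh_fraction(90, 90):.0%} of refreshes repeat a frame")
```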

17. Room-Scale VR

Room-scale VR is a sub-category of VR in which the VR environment is the size of a room. Instead of a stationary VR experience, users can walk around a “box of freedom” in which they can move freely. Most headsets recommend a minimum space of 2 meters by 2 meters for a comfortable virtual reality experience. For safety, some headsets ask you to draw a boundary grid (the Guardian on the Oculus Quest) and to clear your floor of any obstacles.
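A boundary system essentially checks how close the tracked user is to the edge of the play area. Here is a minimal sketch assuming a rectangular play area centred on the origin; real systems support arbitrary shapes, the function names are hypothetical, and the 0.3 m warning margin is a hypothetical value. The 2 m minimum side matches the recommendation mentioned in this section.

```python
def meets_recommended_area(width_m: float, depth_m: float,
                           min_side_m: float = 2.0) -> bool:
    """Check the play area against a minimum recommended size."""
    return width_m >= min_side_m and depth_m >= min_side_m


def near_boundary(x: float, z: float, width_m: float, depth_m: float,
                  warn_margin_m: float = 0.3) -> bool:
    """True if a user at (x, z), measured from the centre of a rectangular
    play area, is within warn_margin_m of an edge or already outside it."""
    dist_to_side = width_m / 2.0 - abs(x)
    dist_to_front_back = depth_m / 2.0 - abs(z)
    return min(dist_to_side, dist_to_front_back) <= warn_margin_m


print(meets_recommended_area(2.5, 2.0))
print(near_boundary(0.0, 0.0, 2.5, 2.0))  # user in the centre
print(near_boundary(1.1, 0.0, 2.5, 2.0))  # user close to a side edge
```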

What Hardware Does Virtual Reality Use?

The autostereoscopic device

Autostereoscopic devices cover a family of screens that display stereoscopic images without the use of special headgear or glasses. The binocular perception of a 3D effect can be produced through different technologies, such as a parallax barrier or a lenticular array. These devices are inherently the easiest to set up. As such, they are well suited to commercial spaces with a lot of foot traffic. On the flip side, they also offer the least immersive experience.

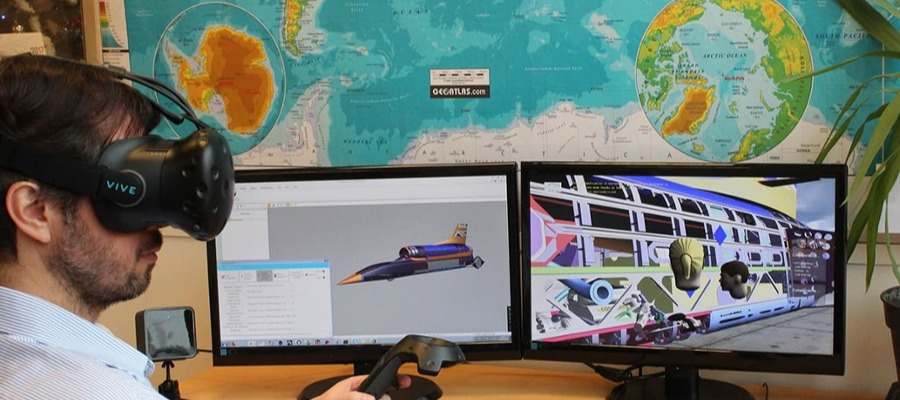

The head-mounted display (HMD)

The head-mounted display (HMD) refers to the virtual reality headset. It basically consists of lenses placed in front of a smartphone-sized screen. It takes the form of a helmet (or sometimes goggles) and can be strapped around your head for comfort. There are three types of headsets to consider for VR:

- Smartphone-powered headsets, which are entry-level headsets, mostly for 3-DOF applications

- Standalone or all-in-one headsets that are limited in use

- Tethered or PC-powered headsets that rely on a high-end computer

If you are not sure which type of headset to use in your business, check out our guide to VR headsets for industrial use.

We also select the top VR headsets for engineers each year. See our comparisons of the best VR headsets:

- Top VR headset for engineers in 2020

- Top VR headset for engineers in 2021

- Best AR/VR headsets for engineers in 2022

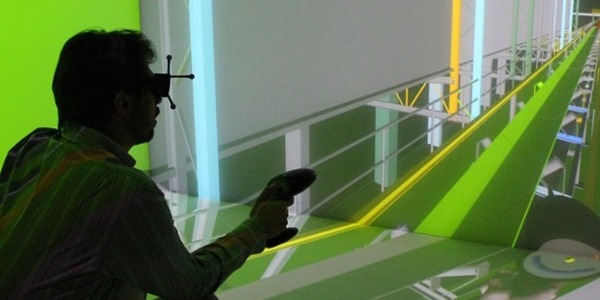

The immersive room (or CAVE)

A CAVE (Cave Automatic Virtual Environment) is a projection-based virtual environment display made of at least two screens. Users wear stereoscopic 3D glasses that enable them to be completely immersed in the virtual experience. CAVEs are more immersive than a Powerwall, and better suited than VR headsets to collaborating on large-scale 3D models with high image quality.

The drawbacks of an immersive room are its steep price and its space requirements. You will need at least $500K to set up an installation. For VR first-timers, trying a smaller system for your use case might be a good idea.

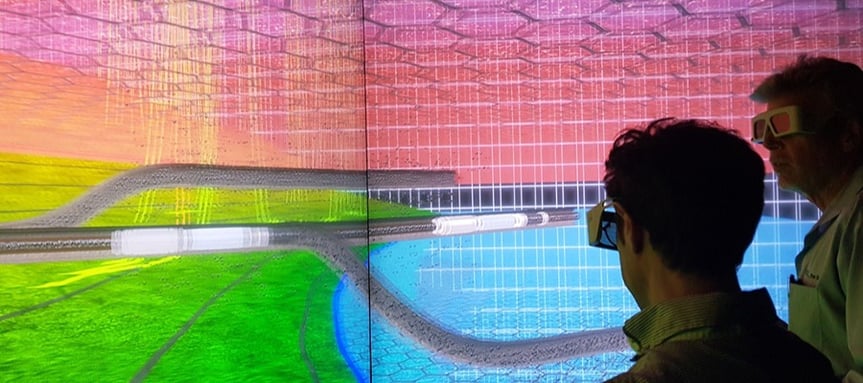

The powerwall

The Powerwall is a projection-based VR display system made up of one or several screens that display two slightly different sets of images to the viewer. Users need special 3D glasses that shutter in sync with the images to see in three dimensions. Powerwalls are less immersive than virtual reality headsets, but they are better suited to teamwork, as users are not fully immersed and can communicate more easily with coworkers.

How VR impacts today’s world – especially VR engineering

Is virtual reality just a hype?

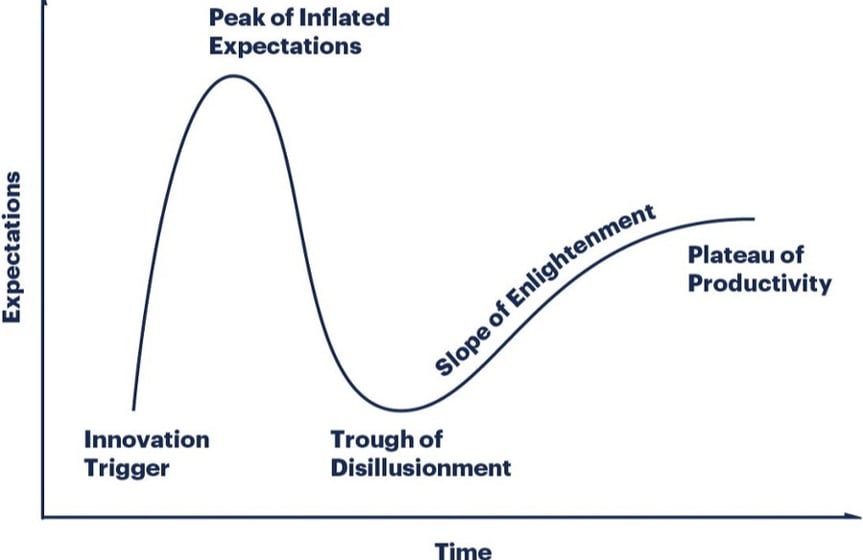

Ever since virtual reality started to make itself known in the 1990s, it has appeared on the Gartner Hype Cycle. But the hype cycle merely classifies emerging technologies according to the attention they receive. In other words, it measures the buzz around new technologies. So, what about the virtual reality hype?

If you look at past hype cycles, VR has been a regular presence, most often in the “Trough of Disillusionment”, which it never seemed to leave. But thanks to today's improved processors and the arrival of affordable HMDs, virtual reality has experienced a revival. The difference is that VR is no longer a niche sector for big industrial groups: smaller companies can now afford virtual reality hardware and software. So, is VR just hype? Not really, but it still needs more than a few success stories from early adopters to really take off.

How is virtual reality shaping today's work?

Now that VR has become affordable, more and more companies are adapting their workplaces for VR collaboration. This has become necessary in today's world, where many employees work from home. However, for complex projects, working remotely may not be possible with videoconferencing tools such as Zoom, Skype or Teams. Immersing people in a virtual world opens up new possibilities, such as reviewing a product's design, iterating and making decisions about a technical issue, and fostering better teamwork.

By 2025, virtual reality could have profoundly reshaped the way we work, especially in remote settings. Of course, many companies still have to adapt, because there is a gap between their expectations and efficient VR-based work sessions. The main areas to focus on to maintain the quality of work in both the virtual and the real world are:

- Giving your employees the right equipment (VR hardware and computer)

- Training the workers to use the VR technology

- Choosing the right VR engineering software for your use case

What are the applications of VR in engineering?

Since VR has become an important part of business, let's review the main use cases currently covered by VR software for engineers.

1. Create a virtual prototype for a product

Virtual prototypes are one of the most valuable tools for industrial designers and VR engineers. Virtual reality software optimizes the design phase of product development by:

- Reducing the number of iterations

- Optimizing prototyping-related costs (allocated time, money and resource)

- Enhancing the immersion

2. Run a project review in virtual reality

Design reviews are necessary in many projects to gather feedback and correct assumptions with your teams, other departments or even customers. They put everyone on the same page about the project. Many companies rely on CAD models to convey design ideas, but viewed on a desktop screen they are a limited tool. Running a project review in virtual reality lets you display and interact with your 3D data at 1:1 scale, which is cheaper than a physical prototype and more intuitive than looking at CAD software on a computer screen.

For more information, check out these 6 essential steps for a project review in VR.

3. Collaborate with workers all around the world

Teamwork is a challenge for companies that have employees working remotely, or subsidiaries in different countries. To answer their needs, virtual reality offers the possibility to create shared digital workspaces where engineers from different locations can work on the same CAD model in real-time. Collaboration in VR enhances communication between teams and accelerates the design-to-market processes.

4. Conduct ergonomics studies

Ergonomic analysis can be very useful, especially when designing interior spaces. Whether it is a building or a vehicle, it is important to know how future customers will react to the design. For example, with a car, you want to know whether drivers can reach every control and have good visibility. With virtual reality, ergonomics can be optimized much faster, reducing costs and time-to-market.

5. Evaluate risks for maintenance operators

Before sending technicians or engineers into a potentially dangerous installation, it is important to give them proper training and to assess the risks to their safety. Virtual reality helps you set up training for situations that are otherwise too dangerous or too expensive to reproduce in real conditions.

6. Visualize and interact with complex data

Sometimes, flat rendering is not enough to fully visualize and interpret complex sets of data. Interactive virtual reality helps you see and analyze phenomena that would otherwise be invisible to the naked eye.

7. Assess and design workstations

In the context of the digital factory and Industry 4.0, virtual reality is a key technology to optimize, simulate and engineer a production line, and interact with it in collaboration. With the 3D model of the workstation (or the entire manufacturing line), virtual reality can be a powerful tool to assess the operator’s safety and well-being and the workstation ergonomics. These studies can be further optimized with the use of a digital twin and a full body tracking gear to visualize the operator in action, both in the real world and in the virtual world.

8. Train specific skills in a virtual environment

Some training use cases involve scenarios that are too expensive, too dangerous or impossible to do in the real world. Virtual reality training offers a safe space to develop both soft skills and hard skills – even when workers are not in the same location.

Please note that “classic” VR training might not be enough for training specialized skills. For this use case you will need a VR system tailored to your needs.

What are advantages and disadvantages of virtual reality in engineering?

The key benefits of VR for engineering

Understand your designs better

There is no substitute for being immersed in your own 3D model at scale 1:1. You can walk inside the construction you’re about to build or drive a car that has yet to be produced. Plus, there’s no need to be an expert to understand the virtual objects you’re displaying in VR, which makes it a great asset to win more projects.

Anticipate issues at the early stages of conception

Compared to traditional desktop-based design methods, with 3D models displayed on a computer screen, spotting design errors in VR is far more intuitive and efficient. It helps VR engineers and designers anticipate design problems and prevent future performance issues.

Improve product and process quality at launch

Because VR solutions help you spot design errors sooner and more efficiently, you avoid costly rework. Better communication around potential issues means more feedback from your teams and all the stakeholders involved in the project, leading to a higher-quality design and a better project overall.

Collaborate better on the same VR model

Virtual reality engineering allows every collaborator to visualize the 3D CAD model at the right scale and interact with the data in real time. Coordination between engineering, design and production teams will increase tremendously thanks to VR collaboration.

Reduce time to market

Virtual reality can considerably speed up business processes, with fast-paced iterations in the design and prototyping phases and optimized processes for production, distribution, maintenance and even operator training.

Save money and resources

Virtual prototyping allows virtual reality engineers and designers to test multiple concepts at a fraction of the price: no resources are spent on building physical prototypes, and labor costs are limited. Making changes, testing other concepts or starting over are all possible without huge investments, which is a great way to minimize risks when launching a new product.

Virtual reality also allows remote collaboration and assistance, which reduces the need to fly specialists around your different locations.

Increase efficiency thanks to VR tools

Virtual reality tools help you analyze and share ideas with your team with features such as:

- Creating bookmarks and notes

- Taking measurements

- Sketching on the model

- Hiding and showing parts

- Recording videos

- Exporting reports

- Etc.

Learn better and faster

Virtual reality is very intuitive, especially for people who are used to entertainment and gaming concepts. Various industries that have tried virtual learning can attest that VR makes concepts easier to understand and retain. Learners progress faster, and what they learn is understood more deeply and retained for longer.

Drawbacks of virtual reality for engineering

Hardware investment costs

The ROI of VR can be high. However, depending on your use case, the investment costs can vary a lot:

- HMDs cost between $300 and $900 per headset

- A powerwall costs on average $6K to $15K (also depending on your installation)

- An immersive room can be installed at a minimum price of $500K

Impacts on the human body

When using VR, especially with older devices or for long periods of time, people can experience physical problems such as headaches, eye strain, dizziness or motion sickness. Fortunately, there are ways to avoid these issues in professional use.

All the VR features and technology that can boost engineering

3D content visualization

Imagine being able to visualize your brand-new product or project before it is even built. All you need is the 3D model and a virtual reality software that can display it. The choice of the right software is crucial, as it is very dependent on your particular use case.

An advanced use case for 3D content visualization is displaying 3D content from different applications in the same virtual environment, or testing 2D interfaces while displaying your 3D model.

3D collisions in virtual reality

Remember those games where your character suddenly walked through a piece of the scenery, or through another character? That is an example of (bad) 3D collision handling. Collision detection is an essential aspect of AR/VR simulation, with applications ranging from verifying measurements to testing different constraints on the model or running simulations with real physics.
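Collision detection is often split into a cheap broad phase and a precise narrow phase. As an illustration of the broad phase only, here is an axis-aligned bounding box (AABB) overlap test in Python: two boxes collide only if their extents overlap on all three axes. This is a generic textbook technique, not a description of how any particular VR product detects collisions, and the example boxes are hypothetical.

```python
from dataclasses import dataclass
from typing import Iterable, Tuple

Vec3 = Tuple[float, float, float]


@dataclass(frozen=True)
class AABB:
    min_pt: Vec3
    max_pt: Vec3

    def overlaps(self, other: "AABB") -> bool:
        """Boxes overlap only if their intervals overlap on x, y and z."""
        return all(
            self.min_pt[i] <= other.max_pt[i] and other.min_pt[i] <= self.max_pt[i]
            for i in range(3)
        )


def aabb_from_points(points: Iterable[Vec3]) -> AABB:
    """Smallest axis-aligned box containing all the given vertices."""
    xs, ys, zs = zip(*points)
    return AABB((min(xs), min(ys), min(zs)), (max(xs), max(ys), max(zs)))


part = aabb_from_points([(0, 0, 0), (1, 0.5, 0), (0.5, 1, 1)])
tool = AABB((0.8, 0.8, 0.8), (2, 2, 2))
print(part.overlaps(tool))  # boxes overlap: a precise test would run next
```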

Cloud technology and virtual reality

What if you did not need a powerful PC at hand to work in virtual reality? That's what cloud VR is all about: it relies on cloud services for all the computing and then streams the 3D content to the end user's headset.
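Cloud VR only works if the network can carry the rendered frames. A quick back-of-the-envelope calculation shows why compression is essential; the resolution, colour depth and compression ratio used here are hypothetical.

```python
def raw_bitrate_bps(width_px: int, height_px: int, eyes: int,
                    bits_per_pixel: int, fps: int) -> int:
    """Uncompressed bitrate of a stereo video stream, in bits per second."""
    return width_px * height_px * eyes * bits_per_pixel * fps


def compressed_mbps(raw_bps: float, compression_ratio: float) -> float:
    """Bitrate after compression, in megabits per second."""
    return raw_bps / compression_ratio / 1e6


raw = raw_bitrate_bps(1920, 1920, eyes=2, bits_per_pixel=24, fps=90)
print(f"uncompressed: {raw / 1e9:.1f} Gbit/s")                         # ~15.9
print(f"at 200:1 compression: {compressed_mbps(raw, 200):.0f} Mbit/s")  # ~80
```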

Human factor integration

When you want to design a product or make ergonomic studies for products used by humans, then the human factors must be integrated in the design process. This can be done either by the user wearing tracking devices or with humans simulated at scale 1:1 in the 3D model.

1. Virtual manikin

A virtual manikin is a puppet that imitates an operator in real time in the virtual environment. This feature makes it possible to visualize human-machine interactions involving one or several workers.

2. Full body tracking in VR

What if you could experience VR with your whole body? Human Body Tracking (or motion tracking) is a technology that enables you to collect data about the user’s posture and movement through external devices. One of the main use cases of full body tracking is ergonomic studies.

For instance, TechViz is compatible with various motion capture and body-tracking devices such as TEA, ART, X-Sens, Teslasuit…
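In an ergonomic study, body-tracking data is typically turned into joint angles that can then be compared with ergonomic guidelines. As a minimal sketch, here is how an elbow angle could be computed from three tracked positions (shoulder, elbow, wrist); the positions are hypothetical, and actual tracking SDKs provide their own skeleton data formats.

```python
import math
from typing import Tuple

Vec3 = Tuple[float, float, float]


def joint_angle_deg(a: Vec3, joint: Vec3, b: Vec3) -> float:
    """Angle at `joint` between the segments joint->a and joint->b."""
    u = [p - j for p, j in zip(a, joint)]
    v = [p - j for p, j in zip(b, joint)]
    dot = sum(x * y for x, y in zip(u, v))
    norms = math.hypot(*u) * math.hypot(*v)
    cos_angle = max(-1.0, min(1.0, dot / norms))  # guard against rounding
    return math.degrees(math.acos(cos_angle))


shoulder, elbow, wrist = (0.0, 1.4, 0.0), (0.0, 1.1, 0.0), (0.3, 1.1, 0.0)
print(f"elbow angle: {joint_angle_deg(shoulder, elbow, wrist):.0f} deg")
```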


3. Hand tracking in VR

Hand tracking is a specific use case of body tracking that focuses on tracking the user's hands. This can be done through an external device, or natively by the head-mounted display. VR gloves used for hand tracking can also provide haptics and/or force feedback.

4. Finger tracking in VR

Finger tracking is another specific use case of body tracking. You will need it if you have to track each finger individually, or when you need controller-free VR with haptics.

Point clouds in virtual reality

A VR point cloud is a set of data points in space that you can visualize in VR. Point clouds are generally produced by 3D scanner applications, and you can use them to feature
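Raw scans can contain millions of points, which is a lot to render at VR frame rates. One common way to thin a point cloud is voxel-grid downsampling: space is divided into small cubes and all the points in a cube are replaced by their centroid. This is a generic sketch, not TechViz's method, and the 10 cm voxel size and sample points are hypothetical.

```python
import math
from collections import defaultdict
from typing import Dict, List, Tuple

Point = Tuple[float, float, float]


def voxel_downsample(points: List[Point], voxel_size: float) -> List[Point]:
    """Replace all points that fall in the same cubic voxel by their centroid."""
    buckets: Dict[Tuple[int, ...], List[Point]] = defaultdict(list)
    for p in points:
        key = tuple(math.floor(c / voxel_size) for c in p)  # voxel index
        buckets[key].append(p)
    return [
        tuple(sum(axis) / len(pts) for axis in zip(*pts))
        for pts in buckets.values()
    ]


scan = [(0.01, 0.01, 0.01), (0.03, 0.03, 0.03), (1.0, 1.0, 1.0)]
print(voxel_downsample(scan, voxel_size=0.1))  # 3 points -> 2 points
```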

Virtual manufacturing assembly

The Virtual Assembly feature uses triangle-to-triangle intersection to detect 3D collisions. It lets users detect when virtual objects intersect, and even record a path with the different collision points that can be reloaded for further studies. It is an ideal solution for project reviews, maintenance and training sessions.
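For readers curious about what a triangle-to-triangle test involves, here is a simplified Python sketch, not TechViz's implementation: two non-coplanar triangles intersect exactly when an edge of one crosses the other, and each edge is checked with the Möller-Trumbore segment/triangle test. Coplanar and barely-touching cases are deliberately ignored, and the example triangles are hypothetical.

```python
from typing import Tuple

Vec3 = Tuple[float, float, float]
Triangle = Tuple[Vec3, Vec3, Vec3]
EPS = 1e-12


def _sub(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def _dot(a: Vec3, b: Vec3) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def _cross(a: Vec3, b: Vec3) -> Vec3:
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def segment_hits_triangle(p0: Vec3, p1: Vec3, tri: Triangle) -> bool:
    """Möller-Trumbore ray/triangle test, limited to the segment p0 -> p1."""
    v0, v1, v2 = tri
    direction = _sub(p1, p0)
    e1, e2 = _sub(v1, v0), _sub(v2, v0)
    h = _cross(direction, e2)
    det = _dot(e1, h)
    if abs(det) < EPS:
        return False  # segment parallel to the triangle's plane
    inv = 1.0 / det
    s = _sub(p0, v0)
    u = inv * _dot(s, h)  # first barycentric coordinate
    if u < 0.0 or u > 1.0:
        return False
    q = _cross(s, e1)
    v = inv * _dot(direction, q)  # second barycentric coordinate
    if v < 0.0 or u + v > 1.0:
        return False
    t = inv * _dot(e2, q)  # position along the segment: 0 at p0, 1 at p1
    return 0.0 <= t <= 1.0


def triangles_intersect(a: Triangle, b: Triangle) -> bool:
    """Non-coplanar triangles intersect iff an edge of one crosses the other."""
    def edges(t: Triangle):
        return [(t[0], t[1]), (t[1], t[2]), (t[2], t[0])]
    return (any(segment_hits_triangle(p, q, b) for p, q in edges(a))
            or any(segment_hits_triangle(p, q, a) for p, q in edges(b)))


floor_tri = ((0.0, 0.0, 0.0), (2.0, 0.0, 0.0), (0.0, 2.0, 0.0))
post = ((0.5, 0.5, -1.0), (0.5, 0.5, 1.0), (1.0, 0.5, 0.0))
print(triangles_intersect(floor_tri, post))
```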

Looking for a personalized feature?

Have you created a feature in your 3D application and want to display it in VR? Thanks to the TechViz TVZLib API, our VR engineering software lets developers program their own features for their 3D applications.

What is the ROI of using VR in business?

Virtual reality can adapt to many use cases and improve processes in your company, but what is the return on investment (ROI) of VR for engineering? Many benefits come from using VR in engineering, such as saving time and money at different stages of product development. But the gains are not only quantitative; they are also qualitative, such as improving process and product quality, fostering an innovation mindset and refining workers' skills.
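For the quantitative part, ROI is usually expressed as net gain divided by investment. Here is a minimal sketch with hypothetical figures (the costs and gains below are illustrative, not benchmarks); keep in mind that the qualitative gains described in this section are not captured by this ratio.

```python
def roi(total_gains: float, total_costs: float) -> float:
    """Return on investment as a ratio: (gains - costs) / costs."""
    return (total_gains - total_costs) / total_costs


# Hypothetical first-year figures for a small VR deployment.
costs = 50_000  # hardware, software licences, training
gains = 80_000  # e.g. fewer physical prototypes and less travel
print(f"ROI: {roi(gains, costs):.0%}")
```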

How to deploy VR in your company

More and more engineers are relying on Augmented Reality (AR) and Virtual Reality (VR) in their jobs. But there are many ways to introduce enterprise virtual reality into your organization. One of the main questions is whether to choose an on-premise deployment or SaaS (Software as a Service).

How to become a Virtual Reality engineer

Augmented reality (AR) and virtual reality (VR) are two innovative technologies in high demand today, which is why the demand for AR and VR engineers is exploding. These technologies may have started in the gaming industry, but they have now spread to many other industries. This evolution has opened the door to countless business opportunities for engineers and developers.

If you are already working with CAD models, becoming a VR engineer is fairly easy. There are multiple plug-ins that interface seamlessly with the CAD software you already use, bringing most of the benefits of VR with very low time and financial investment. Your first investment will be a VR headset.

To become a true VR expert, you will obviously need to master a whole different set of skills, starting with programming languages. VR development often uses C and C++, and a few libraries provide tools and features for integrating VR through programming. You will probably also need to be familiar with real-time 3D engines such as Unity and Unreal Engine, which come with their own development environments. If that fits your profile, don't hesitate to apply for a job at TechViz! We are always looking for highly professional and passionate experts to help us grow our solutions.
